Thought Leadership

Managing Environments - the complexity of isolation and tenancy requirements

Andrew Fong

The cloud era gave us shared infrastructure, which led to product patterns designed for multi-tenant environments. This architectural pattern improved margins and reduced the operational overhead of managing multiple customers. However, since 2020, customer requirements have evolved rapidly, with security, compliance, and data locality requirements for SaaS applications becoming higher priorities. These new requirements dramatically change the architectures and operational tooling required for cloud management.

As enterprises and governments have become more sophisticated, they have come to demand data isolation, version pinning, required upgrade schedules, improved permission models, and more. This has caused a dramatic shift in the landscape of production environments.

The infrastructure world is still catching up to these quickly evolving customer requirements while trying to preserve the efficiencies originally gained from multi-tenant environments.

This post covers the basic architectures of tenancy and outlines best practices for building environments that support different tenancy needs. (We use tenancy and environment somewhat interchangeably in this post.)

Tenancy Types

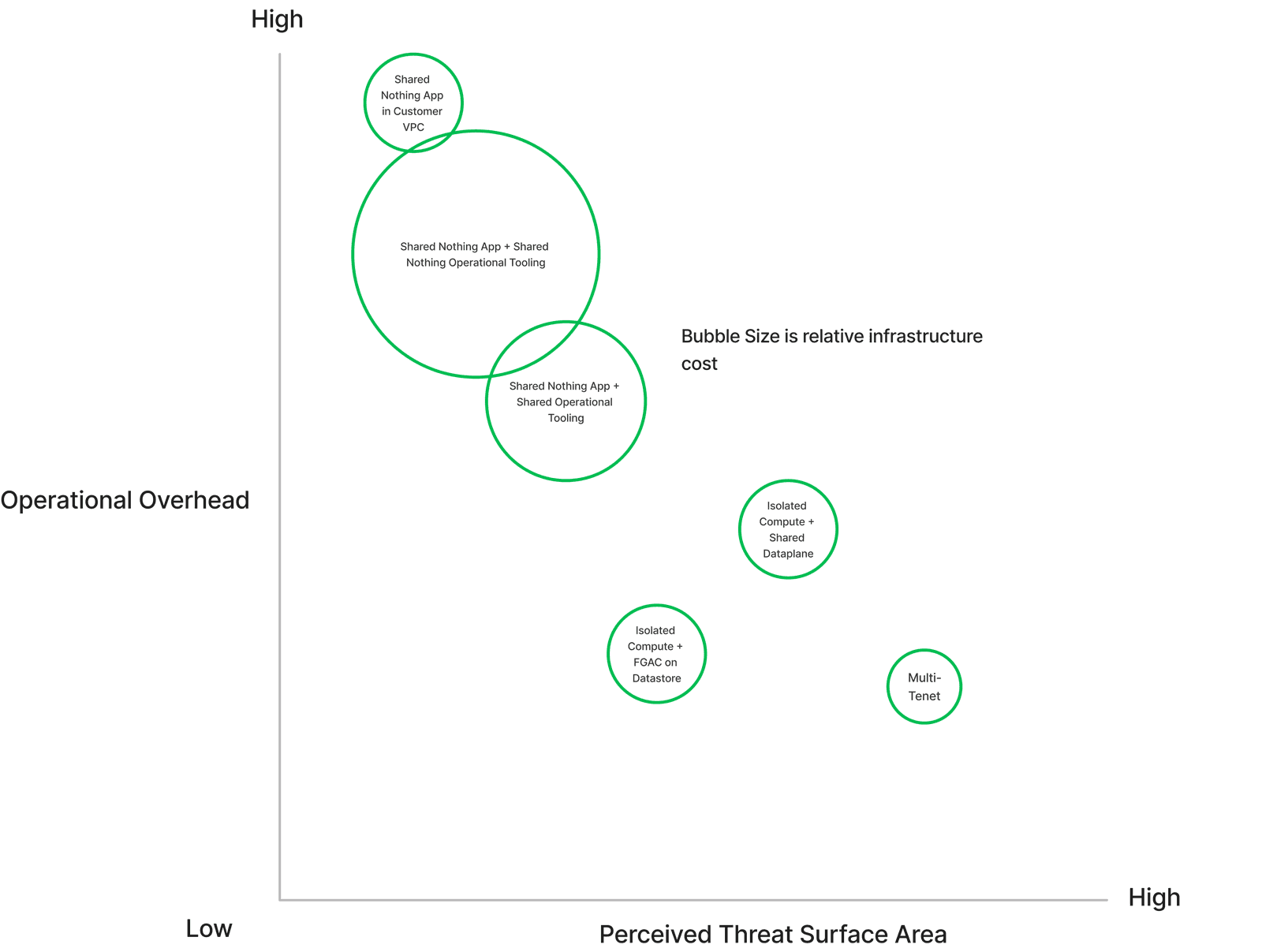

This graph shows the relative operational overhead, cost, and attack surface area of tenancy solutions. You can see that the cost of operations and infrastructure rises as you shift away from multi-tenant on the lower right-hand side of the graph.

Tenancy translates to supporting environments.

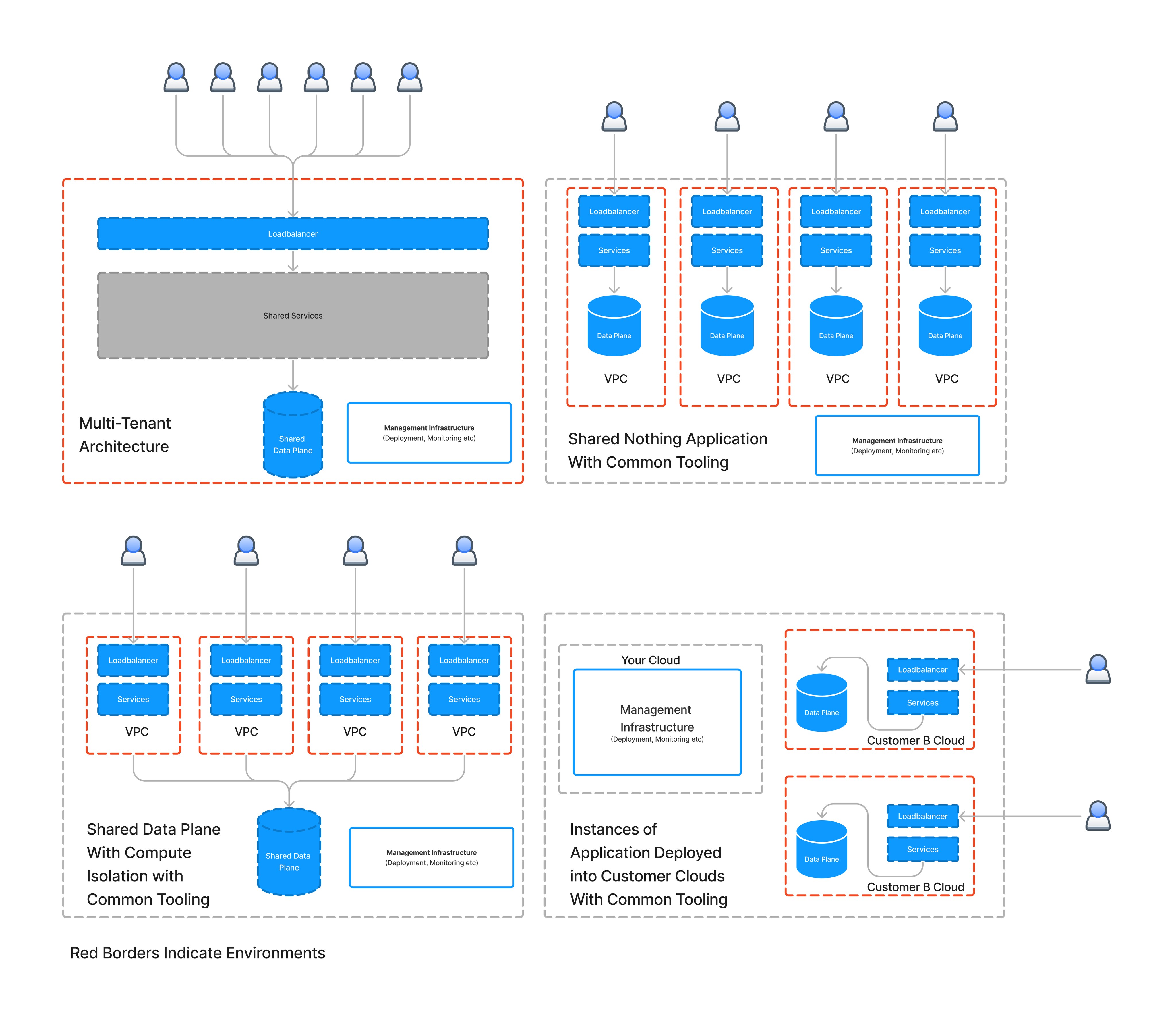

The diagram above shows some of the architectures referenced in the graph. The red borders denote environments. As soon as you shift away from the multi-tenant design, your infrastructure, application, and deployment strategies must account for N environments and not just services.

What is an environment?

Historically, environments have been used in coarse "macro terms" to refer to the concepts of development, production, or staging. This granularity is insufficient when customers ask for custom isolation levels (tenancy). In this document, environment refers to the most restrictive definition: "where the customer's workload is run, data is stored, and what operates on it."
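One way to make this definition concrete is to model an environment as a small record with those three parts. The sketch below is only an illustration: the `Environment` class, its field names, and the cluster and database names are all hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Environment:
    """Hypothetical record for the restrictive definition of an environment."""
    name: str
    workload_runtime: str          # where the customer's workload is run
    data_stores: Tuple[str, ...]   # where the data is stored
    operators: Tuple[str, ...]     # what operates on the workload and data


# The coarse label "production" can hide several distinct environments.
shared = Environment(
    name="production-shared",
    workload_runtime="cluster-shared",
    data_stores=("db-shared",),
    operators=("deploy-engine", "on-call"),
)
isolated = Environment(
    name="production-customer-a",
    workload_runtime="cluster-customer-a",
    data_stores=("db-customer-a",),
    operators=("deploy-engine",),
)


def shares_data(a: Environment, b: Environment) -> bool:
    """Return True if the two environments have any data store in common."""
    return bool(set(a.data_stores) & set(b.data_stores))


print(shares_data(shared, isolated))  # prints False
```

Under this model, "production" is no longer a single thing: each record is its own environment, and isolation questions become comparisons between records.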

The key to controlling the operational overhead is managing environments.

As the demand for isolation increases, you can rearchitect your entire application or create copies of your stack. Rearchitecting is a complicated process that includes plumbing fine-grained access controls similar to how the cloud providers have built IAM permission models. The complexity of building and validating this type of application is almost assuredly prohibitively expensive.

Instead, most organizations choose to create copies of the stack. This has the advantage of a smaller number of moving pieces that are easier to validate and audit.

What are the best practices when duplicating your stack?

Two factors most strongly determine whether increasing isolation levels succeeds:

Separation of Cloud and Application

Declarative Definitions

Separation of Cloud and Application

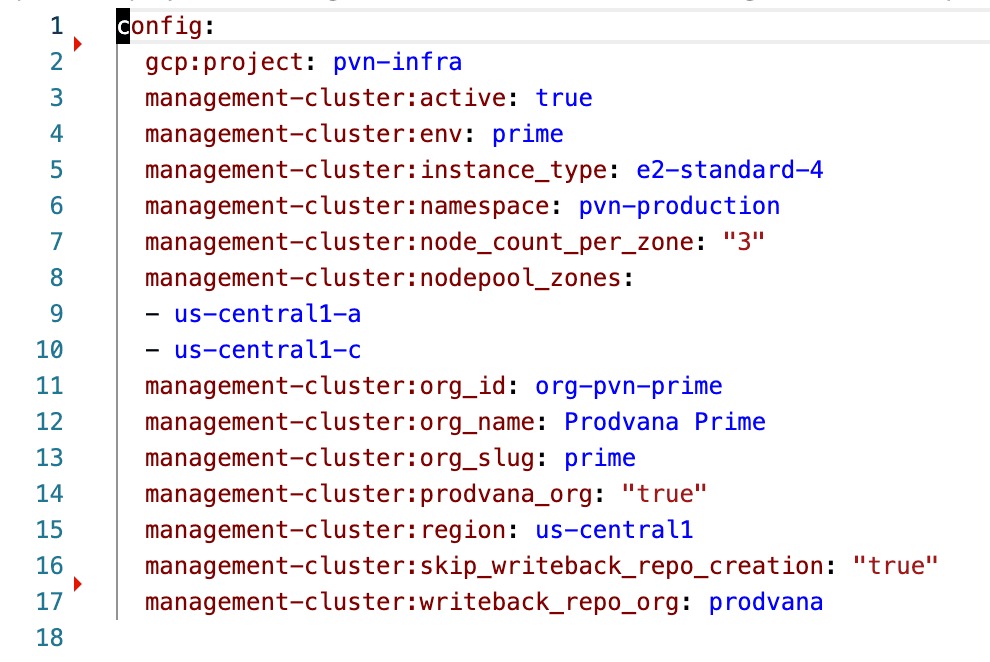

Cloud resources should be separated from application resources in your stack. A good litmus test for this separation is whether you can spin up a blank cloud infrastructure without deploying your application. Ideally, this layer contains only your primitive cloud resources, such as Kubernetes, Storage Buckets, IAM Policies, etc. An example is the following configuration, which focuses exclusively on forming the baseline infrastructure.

It's important to note that neither Docker images nor database schemas leak into this configuration. This configuration only acquires the cloud resources required to start the application.
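To illustrate the litmus test, here is a minimal sketch in plain Python rather than in any real infrastructure tool. The `CloudBaseline` and `ApplicationRelease` classes, the `plan` function, and every resource name are hypothetical. The point is only that the cloud layer can be planned on its own, with the application layered on afterwards.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class CloudBaseline:
    """Cloud layer only: primitive resources, no application details."""
    environment: str
    kubernetes_cluster: str
    storage_buckets: Tuple[str, ...]
    iam_policies: Tuple[str, ...]


@dataclass(frozen=True)
class ApplicationRelease:
    """Application layer: what runs on top of an existing baseline."""
    image: str
    schema_version: int


def plan(baseline: CloudBaseline,
         release: Optional[ApplicationRelease] = None) -> List[str]:
    """List what would be created; the release is optional by design."""
    steps = [f"cluster:{baseline.kubernetes_cluster}"]
    steps += [f"bucket:{b}" for b in baseline.storage_buckets]
    steps += [f"iam:{p}" for p in baseline.iam_policies]
    if release is not None:
        steps += [f"image:{release.image}",
                  f"schema:v{release.schema_version}"]
    return steps


baseline = CloudBaseline(
    environment="customer-a",
    kubernetes_cluster="customer-a-cluster",
    storage_buckets=("customer-a-uploads", "customer-a-backups"),
    iam_policies=("customer-a-readonly",),
)

# Litmus test: a blank environment can be planned with no application at all.
blank = plan(baseline)
full = plan(baseline, ApplicationRelease(image="app:1.4.2", schema_version=7))
print(blank)
print(full[len(blank):])
```

Because `CloudBaseline` has no field for an image or a schema, application details cannot creep into the cloud layer without a visible change to its definition.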

Declarative

Declarative configuration is relatively easy at the cloud layer. For example, Terraform and Pulumi are declarative and are good choices for configuring your infrastructure.

However, the application configuration is a significantly more complex problem. For most historical tenancy solutions, CD pipelines are incredibly complex and focus on the configuration of the pipeline rather than the definition of the environment required to run the binary being deployed.

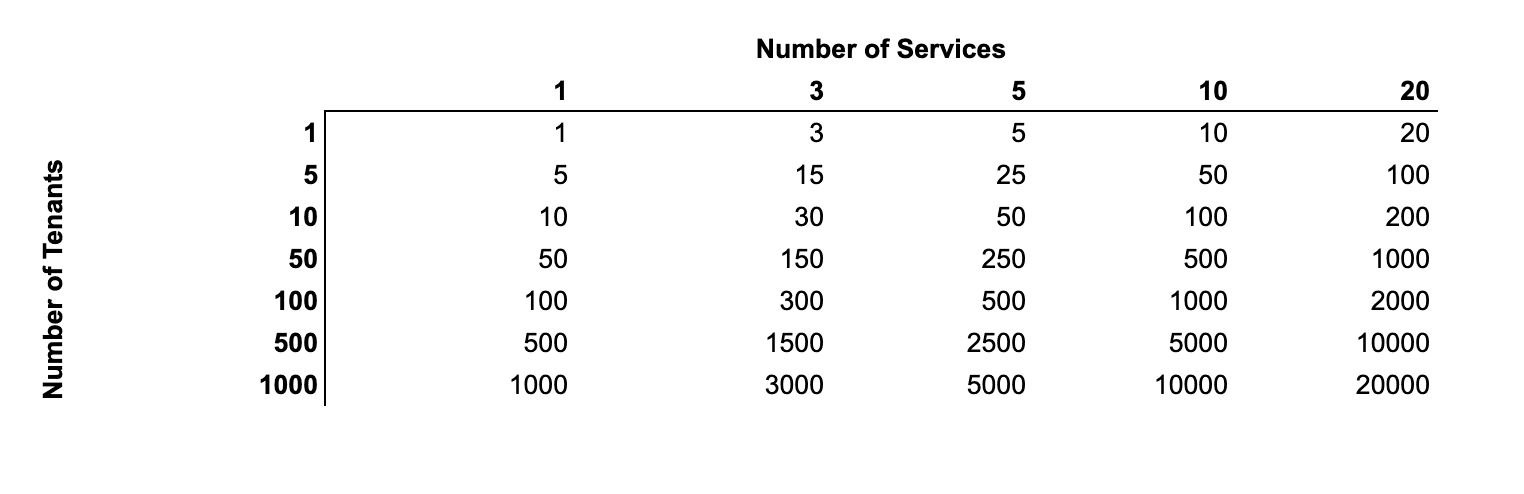

Say you had ten customers with one service per customer; you would have ten pipelines in GitHub Actions, CircleCI, or a similar tool.

The configuration becomes significantly more complicated as you add services, and the number of pipeline steps grows in proportion to the number of services.
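The growth is easy to put into numbers. The sketch below assumes one pipeline per customer and service pair and a fixed number of steps per service; both are simplifying assumptions for illustration, and the function names are hypothetical.

```python
def pipeline_count(customers: int, services_per_customer: int) -> int:
    """Pipelines to maintain, assuming one per (customer, service) pair."""
    return customers * services_per_customer


def step_count(customers: int, services_per_customer: int,
               steps_per_service: int) -> int:
    """Total pipeline steps, assuming the same steps for every service."""
    return pipeline_count(customers, services_per_customer) * steps_per_service


print(pipeline_count(10, 1))   # 10, the ten-customer example above
print(pipeline_count(10, 5))   # 50
print(step_count(10, 5, 4))    # 200
```

Even with modest numbers, the amount of handwritten pipeline configuration multiplies quickly once both the customer count and the service count grow.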

You can build pipelines of pipelines to try to encapsulate the complexity. However, failures now have a massive impact on the system due to the dependent nature of pipelines. A single failed customer deployment can block or cause a rollback of all the work already done. The handcrafted nature and branching logic in pipelines increase the fragility as you add dependencies.

A more elegant approach is to use a declarative configuration for the application environment, similar to how infrastructure is defined. The declarative approach will ensure that the deployment train can keep moving even during failures.

Pairing these declarative configurations with a deployment engine that consumes them moves the complexity into the engine, rather than letting it surface in the pipeline configurations.
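As a rough illustration of what such an engine does, the sketch below reconciles a declared version per environment against the actual state, handling each environment independently so that one failure does not stop the rest. This is a toy model under stated assumptions, not any specific product's design: the `reconcile` function, the `flaky_deploy` callback, and the environment names are all hypothetical.

```python
from typing import Callable, Dict, List


def reconcile(desired: Dict[str, str],
              actual: Dict[str, str],
              deploy: Callable[[str, str], None]) -> List[str]:
    """Move each environment toward its declared version.

    Returns the environments that failed; the others are still updated.
    """
    failed = []
    for env, version in desired.items():
        if actual.get(env) == version:
            continue  # already converged, nothing to do
        try:
            deploy(env, version)
        except Exception:
            failed.append(env)  # record the failure and keep going
            continue
        actual[env] = version
    return failed


def flaky_deploy(env: str, version: str) -> None:
    """Stand-in for a real deployment; fails for one environment."""
    if env == "customer-b":
        raise RuntimeError("deployment failed")


desired = {"customer-a": "1.5.0", "customer-b": "1.5.0", "customer-c": "1.5.0"}
actual = {"customer-a": "1.4.2", "customer-b": "1.4.2", "customer-c": "1.5.0"}

failed = reconcile(desired, actual, flaky_deploy)
print(failed)   # ['customer-b']
print(actual)   # customer-a is updated, customer-b stays on 1.4.2
```

Because the desired state is data rather than a sequence of pipeline steps, the engine can be rerun later and will retry only the environments that have not yet converged.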

Conclusion: Environments are first-class citizens.

Moving forward, engineering teams need to consider working with more than one copy of their product in production — a noticeable deviation from the early days of the cloud. Like servers and services, environments need defined owners, stakeholders, invariants, and business rules.

Treat them as cattle, not pets.